For migration from VMware vSphere to Nutanix AHV, please use Nutanix Move (a free V2V tool developed by Nutanix).

In two previous blog posts I described how to migrate Windows 2012R2 from VMware ESXi to Nutanix AHV and SUSE 11SP4 from VMware ESXi to Nutanix AHV.

Today, a quick guide on how to migrate RHEL 6.5 from ESXi to Nutanix AHV. RHEL 6.5 is a fairly modern operating system, so the migration does not take much work.

Requirements:

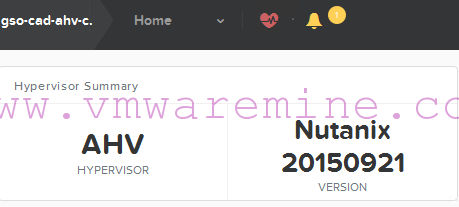

- AOS – 4.6.1.1 or newer

- AHV – 20160217.2 or newer

- vSphere 5.0 U2 or newer

- connectivity between legacy ESXi servers and Nutanix CVMs over NFS

- remove all snapshots from VM

Procedure:

- Check RHEL and kernel versions first

    [root@rhel65 ~]# uname -r
    2.6.32-431.el6.x86_64
    [root@rhel65 ~]# cat /etc/redhat-release
    Red Hat Enterprise Linux Server release 6.5 (Santiago)

- Verify that the virtio drivers are available for the running kernel. As you can see, they are all present as modules

    [root@rhel65 ~]# grep -i virtio /boot/config-`uname -r`
    CONFIG_NET_9P_VIRTIO=m
    CONFIG_VIRTIO_BLK=m
    CONFIG_SCSI_VIRTIO=m
    CONFIG_VIRTIO_NET=m
    CONFIG_VIRTIO_CONSOLE=m
    CONFIG_HW_RANDOM_VIRTIO=m
    CONFIG_VIRTIO=m
    CONFIG_VIRTIO_RING=m
    CONFIG_VIRTIO_PCI=m
    CONFIG_VIRTIO_BALLOON=m
    [root@rhel65 ~]#
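If you have many VMs to check, the grep above can be wrapped in a small function. This is only a sketch: `check_virtio_config` is a hypothetical helper name, and the list of options it treats as required is my assumption, picked from the output above rather than from any official requirement.

```shell
#!/bin/sh
# Hypothetical helper: report whether selected virtio options are enabled
# in a kernel config file. The option list is an assumption, not an
# official list of what AHV needs.
check_virtio_config() {
    config_file=$1
    missing=0
    for opt in CONFIG_VIRTIO CONFIG_VIRTIO_PCI CONFIG_VIRTIO_BLK \
               CONFIG_VIRTIO_NET CONFIG_SCSI_VIRTIO; do
        # Accept both built-in (=y) and modular (=m) drivers.
        if grep -Eq "^${opt}=[ym]\$" "$config_file"; then
            echo "ok       $opt"
        else
            echo "MISSING  $opt"
            missing=1
        fi
    done
    return $missing
}

# Usage on the guest (not executed here):
#   check_virtio_config "/boot/config-$(uname -r)"
```

The function returns a non-zero status when any option is missing, so it can be used in an `if` or with `||`.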

- Check whether the virtio modules are part of the initramfs. RHEL 6.5 has the virtio drivers built in. If there is no output, the modules are missing and you have to create a new initrd (RHEL 5.X) or initramfs (RHEL 6.X) image.

    [root@rhel65 ~]# zcat /boot/initramfs-2.6.32-431.el6.x86_64.img | cpio -it | grep virtio
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/block/virtio_blk.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/scsi/virtio_scsi.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio_pci.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio_ring.ko
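Instead of reading the listing by eye, you can ask which modules are missing. The sketch below assumes a gzip-compressed cpio image, as in the command above; `missing_virtio_modules` is a hypothetical name, and which modules you pass to it is up to you.

```shell
#!/bin/sh
# Hypothetical helper: read an initramfs file listing on stdin
# (for example from `zcat IMAGE | cpio -it`) and print every module
# named on the command line that does not appear in the listing.
missing_virtio_modules() {
    listing=$(cat)
    for mod in "$@"; do
        case "$listing" in
            *"/${mod}.ko"*) ;;        # module file found in the image
            *) echo "$mod" ;;         # not found, report it
        esac
    done
}

# Usage on the guest (not executed here):
#   zcat "/boot/initramfs-$(uname -r).img" | cpio -it 2>/dev/null \
#       | missing_virtio_modules virtio_pci virtio_blk virtio_scsi
```

No output means every requested module is in the image; any name printed is a module you still need to add.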

- create new initrd with virtio modules – RHEL 5.X

    [root@rhel65 ~]# mkinitrd --with="virtio_blk virtio_pci" -f -v /boot/initrd-`uname -r`.img `uname -r`

- create new initrd with virtio modules – RHEL 6.X

    [root@rhel65 ~]# dracut --add-drivers "virtio_pci virtio_blk" -f -v /boot/initramfs-`uname -r`.img `uname -r`
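If you script this across a mixed fleet, the choice between the two commands can be made from the release file. This is a sketch only: `rhel_major` and `initrd_rebuild_cmd` are hypothetical helper names, and the function just prints the command from this post so you can review it before running it.

```shell
#!/bin/sh
# Hypothetical helper: extract the major release number from a
# redhat-release style file ("... release 6.5 (Santiago)" -> 6).
rhel_major() {
    sed -n 's/.*release \([0-9][0-9]*\).*/\1/p' "$1"
}

# Hypothetical helper: print (not run) the rebuild command used in this
# post for the given major release and kernel version.
initrd_rebuild_cmd() {
    major=$1
    kver=$2
    case "$major" in
        5) printf '%s\n' "mkinitrd --with=\"virtio_blk virtio_pci\" -f -v /boot/initrd-${kver}.img ${kver}" ;;
        6) printf '%s\n' "dracut --add-drivers \"virtio_pci virtio_blk\" -f -v /boot/initramfs-${kver}.img ${kver}" ;;
        *) echo "unsupported release: ${major}" >&2; return 1 ;;
    esac
}

# Usage on the guest (not executed here):
#   initrd_rebuild_cmd "$(rhel_major /etc/redhat-release)" "$(uname -r)"
```

Releases other than 5 and 6 are rejected on purpose, because this post does not cover them.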

- Check the new initramfs (RHEL 6.X). The virtio modules are there, so we can shut down the server

    [root@rhel65 ~]# zcat /boot/initramfs-`uname -r`.img | cpio -it | grep virtio
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/block/virtio_blk.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/scsi/virtio_scsi.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio_pci.ko
    lib/modules/2.6.32-431.el6.x86_64/kernel/drivers/virtio/virtio_ring.ko
    96407 blocks

Log in to Prism and create a virtual machine. Remember to add a vNIC and the disks. The disks must be added in the right order. When adding each disk, choose the options below:

- Operation: clone from NDFS file

- Bus type: SCSI

- Source: the VMware vSphere guest's -flat vmdk file only.

Power on the new VM and launch the console. Let’s fix networking.

- Remove the file below and reboot the server

    [root@rhel65 ~]# rm -f /etc/udev/rules.d/70-persistent-net.rules

- Edit the same file (udev regenerates it during boot) and:

  - note down the MAC address

  - change eth1 to eth0

    # This file was automatically generated by the /lib/udev/write_net_rules
    # program, run by the persistent-net-generator.rules rules file.
    #
    # You can modify it, as long as you keep each rule on a single
    # line, and change only the value of the NAME= key.

    # PCI device 0x1af4:0x1000 (virtio-pci)
    SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", ATTR{address}=="52:54:00:2a:43:c6", ATTR{type}=="1", KERNEL=="eth*", NAME="eth0"
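The same edit can be done without opening an editor. The sketch below assumes the rule layout shown above (one rule per line, with `ATTR{address}` and `NAME` keys); `rule_mac` and `rename_rule` are hypothetical helper names.

```shell
#!/bin/sh
# Hypothetical helper: print the MAC address recorded in the rule that
# names the given interface.
rule_mac() {
    rules=$1
    name=$2
    sed -n "/NAME=\"${name}\"/s/.*ATTR{address}==\"\([0-9A-Fa-f:]*\)\".*/\1/p" "$rules"
}

# Hypothetical helper: change NAME="<from>" to NAME="<to>" in the rules
# file. The result is written back with `cat` so the original file keeps
# its ownership and permissions.
rename_rule() {
    rules=$1
    from=$2
    to=$3
    work=$(mktemp) || return 1
    if sed "s/NAME=\"${from}\"/NAME=\"${to}\"/" "$rules" > "$work"; then
        cat "$work" > "$rules"
        rc=$?
    else
        rc=1
    fi
    rm -f "$work"
    return $rc
}

# Usage on the guest (not executed here):
#   rule_mac /etc/udev/rules.d/70-persistent-net.rules eth1
#   rename_rule /etc/udev/rules.d/70-persistent-net.rules eth1 eth0
```

Only the value of the `NAME=` key is touched, which is what the comment at the top of the rules file asks for.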

- Edit the network configuration file and:

  - remove the UUID line

  - change the MAC address (HWADDR) to the one noted from the udev rules file

    [root@rhel65 ~]# vi /etc/sysconfig/network-scripts/ifcfg-eth0

    DEVICE=eth0
    TYPE=Ethernet
    ONBOOT=yes
    NM_CONTROLLED=yes
    BOOTPROTO=none
    HWADDR=52:54:00:2a:43:c6
    IPADDR=10.4.92.22
    PREFIX=24
    GATEWAY=10.4.92.1
    DNS1=10.4.89.111
    DEFROUTE=yes
    IPV4_FAILURE_FATAL=yes
    IPV6INIT=no
    NAME="System eth0"
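For a scripted version of this edit, here is a minimal sketch. `update_ifcfg` is a hypothetical helper name; it assumes a plain `KEY=value` file like the one above, drops any `UUID=` line and replaces `HWADDR=` with the MAC you pass in.

```shell
#!/bin/sh
# Hypothetical helper: remove the UUID line and set HWADDR in an
# ifcfg-style file. The new HWADDR line is appended at the end, which is
# fine because the file is a list of independent KEY=value assignments.
update_ifcfg() {
    cfg=$1
    mac=$2
    work=$(mktemp) || return 1
    if sed -e '/^UUID=/d' -e '/^HWADDR=/d' "$cfg" > "$work"; then
        echo "HWADDR=${mac}" >> "$work"
        # Write back with cat so the original file keeps its permissions.
        cat "$work" > "$cfg"
        rc=$?
    else
        rc=1
    fi
    rm -f "$work"
    return $rc
}

# Usage on the guest (not executed here):
#   update_ifcfg /etc/sysconfig/network-scripts/ifcfg-eth0 52:54:00:2a:43:c6
```

Keep a copy of the original file before running anything like this on a production VM.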

- restart networking services and you should have network up and running

    [root@rhel65 ~]# service network restart
    Shutting down interface eth0: [ OK ]
    Shutting down loopback interface: [ OK ]
    Bringing up loopback interface: [ OK ]
    Bringing up interface eth0: Determining if ip address 10.4.92.22 is already in use for device eth0... [ OK ]
    [root@rhel65 ~]#
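If the interface does not come up, one thing worth checking (my suggestion, not a step from the procedure above) is whether HWADDR in the config file really matches the MAC of the new vNIC. A minimal sketch, with `hwaddr_matches` as a hypothetical helper name:

```shell
#!/bin/sh
# Hypothetical helper: succeed only if HWADDR in the ifcfg file equals
# the MAC address stored in the given address file (on a live system,
# /sys/class/net/<device>/address). The comparison ignores case.
hwaddr_matches() {
    cfg=$1
    addr_file=$2
    want=$(sed -n 's/^HWADDR=//p' "$cfg" | tr -d '"' | tr 'A-F' 'a-f')
    have=$(tr 'A-F' 'a-f' < "$addr_file")
    [ -n "$want" ] && [ "$want" = "$have" ]
}

# Usage on the guest (not executed here):
#   hwaddr_matches /etc/sysconfig/network-scripts/ifcfg-eth0 \
#       /sys/class/net/eth0/address || echo "HWADDR mismatch"
```

A mismatch usually means the config file still carries the MAC address of the old VMware vNIC.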


Migration series

- Migrate Windows 2012R2 from Citrix XenServer to Nutanix AHV

- Migrate Suse Linux from VMware ESXi to Nutanix AHV in minutes


Art, I tried this on a CentOS 6.6 VM originally running on ESXi. Most everything went ok but after restarting the network service I got “Device eth0 does not seem to be present…” when it tried to bring up that interface. Has something changed in AHV since you wrote this article? I will try restarting the VM on AHV next and see if that works.